As you know that MySQL 5.7 is going to be in its end of life this month (when I wrote this article) and many businesses are upgrading to 8 ( except few ones, believe me its true) . Many cloud vendors are providing extra support and time to their customer so that they can move to 8. One of the major change in 8 is the default character type and collation. Previously the default one was latin1 and also the alias of utf8 was utf8mb3 but now it is utf8mb4. There are plenty of articles from many database service providers that says no data and table structural changes are required. Although it is true to some extent but there are some major changes, if being missed can cause your application to behave weirdly.

Lets go basic first !!

What is a character set ?

A character is a letter, let say A,B,C . Now I want to encode these letters i.e. A can be represented by the ! and B can be represented by the $. If I combine the letter and their encoding it can be called as a character set.

There are total 41 character set in 8.0.33. I wont go in detail of every character set as this is out of topic but still if you are more interested in reading about it, this is a manual. This link will also explain how utf8mb4 is different from the utf8mb3 and other character set and how man bytes does it take to store a single character.

mysql> select version();+-----------+| version() |+-----------+| 8.0.33 |+-----------+1 row in set (0.00 sec)mysql> select count(1) from information_schema.CHARACTER_SETS;+----------+| count(1) |+----------+| 41 |+----------+1 row in set (0.00 sec)

What is a collation ?

Above I mentioned that if I replace A by ! , then I am imposing rule . This rule is called as a collation. This is a very simple type of collation. Collation can be much more complex, depending on the type of rules one have created.

In total there are 286 collations in 8.0.33. There can be multiple types of collation for one character set for different languages. For example , see below

mysql> select * from collations where CHARACTER_SET_NAME='utf8mb3';+-----------------------------+--------------------+-----+------------+-------------+---------+---------------+| COLLATION_NAME | CHARACTER_SET_NAME | ID | IS_DEFAULT | IS_COMPILED | SORTLEN | PAD_ATTRIBUTE |+-----------------------------+--------------------+-----+------------+-------------+---------+---------------+| utf8mb3_general_ci | utf8mb3 | 33 | Yes | Yes | 1 | PAD SPACE || utf8mb3_tolower_ci | utf8mb3 | 76 | | Yes | 1 | PAD SPACE || utf8mb3_bin | utf8mb3 | 83 | | Yes | 1 | PAD SPACE || utf8mb3_unicode_ci | utf8mb3 | 192 | | Yes | 8 | PAD SPACE || utf8mb3_icelandic_ci | utf8mb3 | 193 | | Yes | 8 | PAD SPACE || utf8mb3_latvian_ci | utf8mb3 | 194 | | Yes | 8 | PAD SPACE || utf8mb3_romanian_ci | utf8mb3 | 195 | | Yes | 8 | PAD SPACE || utf8mb3_slovenian_ci | utf8mb3 | 196 | | Yes | 8 | PAD SPACE || utf8mb3_polish_ci | utf8mb3 | 197 | | Yes | 8 | PAD SPACE || utf8mb3_estonian_ci | utf8mb3 | 198 | | Yes | 8 | PAD SPACE || utf8mb3_spanish_ci | utf8mb3 | 199 | | Yes | 8 | PAD SPACE || utf8mb3_swedish_ci | utf8mb3 | 200 | | Yes | 8 | PAD SPACE || utf8mb3_turkish_ci | utf8mb3 | 201 | | Yes | 8 | PAD SPACE || utf8mb3_czech_ci | utf8mb3 | 202 | | Yes | 8 | PAD SPACE || utf8mb3_danish_ci | utf8mb3 | 203 | | Yes | 8 | PAD SPACE || utf8mb3_lithuanian_ci | utf8mb3 | 204 | | Yes | 8 | PAD SPACE || utf8mb3_slovak_ci | utf8mb3 | 205 | | Yes | 8 | PAD SPACE || utf8mb3_spanish2_ci | utf8mb3 | 206 | | Yes | 8 | PAD SPACE || utf8mb3_roman_ci | utf8mb3 | 207 | | Yes | 8 | PAD SPACE || utf8mb3_persian_ci | utf8mb3 | 208 | | Yes | 8 | PAD SPACE || utf8mb3_esperanto_ci | utf8mb3 | 209 | | Yes | 8 | PAD SPACE || utf8mb3_hungarian_ci | utf8mb3 | 210 | | Yes | 8 | PAD SPACE || utf8mb3_sinhala_ci | utf8mb3 | 211 | | Yes | 8 | PAD SPACE || utf8mb3_german2_ci | utf8mb3 | 212 | | Yes | 8 | PAD SPACE || utf8mb3_croatian_ci | utf8mb3 | 213 | | Yes | 8 | PAD SPACE || utf8mb3_unicode_520_ci | utf8mb3 | 214 | | Yes | 8 | PAD SPACE || utf8mb3_vietnamese_ci | utf8mb3 | 215 | | Yes | 8 | PAD SPACE || utf8mb3_general_mysql500_ci | utf8mb3 | 223 | | Yes | 1 | PAD SPACE |+-----------------------------+--------------------+-----+------------+-------------+---------+---------------+28 rows in set (0.01 sec)

Total number of collations in 8 :mysql> select count(*) from COLLATIONS;+----------+| count(*) |+----------+| 286 |+----------+1 row in set (0.00 sec)mysql> select version();+-----------+| version() |+-----------+| 8.0.33 |+-----------+1 row in set (0.00 sec)

Ok, Now going back to the original topic of our blog:

In collation table above, there is a column name known as “pad attribute” . Each collation has a property which talks about the PAD_ATTRIBUTE i.e. if a string can have trailing space (a space after string) as its own part (without considering it separately) or should it be treated as its additional character. It may sounds confusing now but I will clear it further as this is a main topic of this article.

How pad attribute makes the difference while upgrading ?

In many cases if we were using latin1 or utf8 (which was an alias of utf8mb3) as the character set of our tables in 5.7, most probably we are using latin1_swedish_ci or utf8mb3_general_ci as its collation type because these are the default one and rarely we do change it until unless we have some specific business needs. Now lets talk about a case wherein we have a table with character set as utf8mb3

mysql> show create table check_mb3\G;*************************** 1. row *************************** Table: check_mb3Create Table: CREATE TABLE `check_mb3` ( `id` int DEFAULT NULL, `name` char(10) DEFAULT NULL, UNIQUE KEY `name` (`name`)) ENGINE=InnoDB DEFAULT CHARSET=utf8mb31 row in set (0.01 sec)mysql> insert into check_mb3 values(1,'ankit');Query OK, 1 row affected (0.00 sec)mysql> insert into check_mb3 values(1,'ankit ');ERROR 1062 (23000): Duplicate entry 'ankit' for key 'check_mb3.name'

mysql> insert into check_mb3 values(2,'guga');Query OK, 1 row affected (0.00 sec)mysql> insert into check_mb3 values(3,'guga ');ERROR 1062 (23000): Duplicate entry 'guga' for key 'check_mb3.name'

mysql> insert into check_mb3 values(4,'victor');Query OK, 1 row affected (0.00 sec)mysql> insert into check_mb3 values(5,'victor ');ERROR 1062 (23000): Duplicate entry 'victor' for key 'check_mb3.name'

Until now, everything went as expected and we are not able to insert the same string even if it has trailing spaces which means, trailing space has been removed when being stored on disk and thus both strings (‘ankit’ & ‘ankit ‘) are same for the user or client . Now let’s try to migrate our tables to 8 by changing the character set since utf8mb4 is default one and comes with collations which has NO PAD option.

mysql> alter table check_mb3 CHARACTER SET UTF8mb4;Query OK, 0 rows affected (0.01 sec)Records: 0 Duplicates: 0 Warnings: 0mysql> alter table check_mb3 modify name char(10) CHARACTER SET utf8mb4 collate utf8mb4_0900_ai_ci;Query OK, 3 rows affected (0.03 sec)Records: 3 Duplicates: 0 Warningsmysql> show create table check_mb3\G;*************************** 1. row *************************** Table: check_mb3Create Table: CREATE TABLE `check_mb3` ( `id` int DEFAULT NULL, `name` char(10) CHARACTER SET utf8mb4 collate utf8mb4_0900_ai_ci DEFAULT NULL, UNIQUE KEY `name` (`name`)) ENGINE=InnoDB DEFAULT CHARSET=utf8mb4 COLLATE=utf8mb4_0900_ai_ci1 row in set (0.00 sec)mysql> insert into check_mb3 values(4,'victor ');ERROR 1062 (23000): Duplicate entry 'victor' for key 'check_mb3.name'mysql> select * from check_mb3;+------+--------+| id | name |+------+--------+| 1 | ankit || 2 | guga || 3 | victor |+------+--------+3 rows in set (0.00 sec)

OOPS !!, we didn’t expect this, but we are fine in this case when we are using data type as CHAR. This is because as per SQL standards, while storing the CHAR data, both string should be treated as same regardless of the collation but not while READING. Keep on reading further.

but lets think of a use case wherein our business logic says that

“every string should end with a trailing space”

and hence accordingly we will insert a string with a space for this test

mysql> insert into check_mb3 values(7,'ankittf84 ');Query OK, 1 row affected (0.01 sec)--------and then we read the same string with space----mysql> select * from check_mb3 where name='ankittf84 ';Empty set (0.00 sec)

Here our application has started behaving weird and user will assume that our string doesn’t exists. However if we do same in utf8mb3 collation (which was default in MySQL 5.7) the above read will return the data because while reading it treats both string as different. I repeat , while reading it treats both string as different but not while storing because the data is being stored identically, but when read, InnoDB do the comparison based on collation type i.e. trim space or consider space.

mysql> show create table check_mb3_again;+-----------------+--------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------+| Table | Create Table |+-----------------+--------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------+| check_mb3_again | CREATE TABLE `check_mb3_again` ( `id` int DEFAULT NULL, `name` char(10) CHARACTER SET utf8mb3 COLLATE utf8mb3_general_ci DEFAULT NULL, UNIQUE KEY `name` (`name`)) ENGINE=InnoDB DEFAULT CHARSET=utf8mb4 COLLATE=utf8mb4_0900_ai_ci |+-----------------+--------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------+1 row in set (0.00 sec)mysql> select COLLATION_NAME,PAD_ATTRIBUTE from information_schema.collations where COLLATION_NAME='utf8mb3_general_ci';+--------------------+---------------+| COLLATION_NAME | PAD_ATTRIBUTE |+--------------------+---------------+| utf8mb3_general_ci | PAD SPACE |+--------------------+---------------+mysql> insert into check_mb3_again values (1,'ankit ');Query OK, 1 row affected (0.00 sec)mysql> select * from check_mb3_again where name='ankit ';+------+-------+| id | name |+------+-------+| 1 | ankit |+------+-------+1 row in set (0.00 sec)

Im sure you must be thinking “why even any application will search like this” and I would be completely agree with you. But hold on, I explained this just to setup the background.

NOW, major question is how migration to 8 will corrupt the data while writing ?

Lets try to understand with an example wherein same table structure but with VARCHAR data type.

1. Keep utf8mb3 for now which is default in 5.7 :

mysql> CREATE TABLE check_mb3_again_withvarchar (name varchar(10)) character set utf8mb3 collate utf8mb3_general_ci ;Query OK, 0 rows affected, 2 warnings (0.02 sec)mysql> alter table check_mb3_again_withvarchar modify name varchar(10) unique key;Query OK, 0 rows affected (0.01 sec)Records: 0 Duplicates: 0 Warnings: 0mysql> insert into check_mb3_again_withvarchar values('ankit');Query OK, 1 row affected (0.01 sec)mysql> insert into check_mb3_again_withvarchar values('ankit ');ERROR 1062 (23000): Duplicate entry 'ankit ' for key 'check_mb3_again_withvarchar.name'

So this is expected that even if application or web, sends the same string with trailing space, it will be rejected.

Now its time to migrate the table to 8 using mb4 collation.

2. Change table and column to utf8mb4 for now which is default in 8:

mysql> alter table check_mb3_again_withvarchar charset utf8mb4;Query OK, 0 rows affected (0.01 sec)Records: 0 Duplicates: 0 Warnings: 0mysql> alter table check_mb3_again_withvarchar modify name varchar(10) CHARACTER SET utf8mb4 collate utf8mb4_0900_ai_ci;Query OK, 1 row affected (0.02 sec)Records: 1 Duplicates: 0 Warnings: 0mysql> show create table check_mb3_again_withvarchar;+-----------------------------+----------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------+| Table | Create Table |+-----------------------------+----------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------+| check_mb3_again_withvarchar | CREATE TABLE `check_mb3_again_withvarchar` ( `name` varchar(10) CHARACTER SET utf8mb4 COLLATE utf8mb4_0900_ai_ci DEFAULT NULL, UNIQUE KEY `name` (`name`)) ENGINE=InnoDB DEFAULT CHARSET=utf8mb4 COLLATE=utf8mb4_0900_ai_ci |+-----------------------------+----------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------------+1 row in set (0.00 sec)

Ok, so we have migrated the table successfully, lets continue with our writes –

mysql> insert into check_mb3_again_withvarchar values('ankit ');Query OK, 1 row affected (0.00 sec)mysql> select * from check_mb3_again_withvarchar;+--------+| name |+--------+| ankit || ankit |+--------+2 rows in set (0.00 sec)

So, now what would you say here and your data became inconsistent and you are gone into a nightmare without even realizing this and you will realize it after years or months ???

Every problem has a solution :

I learnt in my life that every problem has a solution and success is, how well you solve your problems.

Initially I mentioned that there are multiple collations being supported by one character set, we can select the one we need. By “need” I mean several factors like the type of character we need, do we need to compare the strings without spaces or with spaces.

Considering the specific case mentioned in this topic, if we are looking to opt utf8mb4 but with PAD SPACES, we can choose one from collation table.

Lets talk with an example :

mysql> select COLLATION_NAME,PAD_ATTRIBUTE from information_schema.collations where CHARACTER_SET_NAME='utf8mb4' and PAD_ATTRIBUTE='PAD SPACE';+------------------------+---------------+| COLLATION_NAME | PAD_ATTRIBUTE |+------------------------+---------------+| utf8mb4_general_ci | PAD SPACE || utf8mb4_bin | PAD SPACE || utf8mb4_unicode_ci | PAD SPACE || utf8mb4_icelandic_ci | PAD SPACE || utf8mb4_latvian_ci | PAD SPACE || utf8mb4_romanian_ci | PAD SPACE || utf8mb4_slovenian_ci | PAD SPACE || utf8mb4_polish_ci | PAD SPACE || utf8mb4_estonian_ci | PAD SPACE || utf8mb4_spanish_ci | PAD SPACE || utf8mb4_swedish_ci | PAD SPACE || utf8mb4_turkish_ci | PAD SPACE || utf8mb4_czech_ci | PAD SPACE || utf8mb4_danish_ci | PAD SPACE || utf8mb4_lithuanian_ci | PAD SPACE || utf8mb4_slovak_ci | PAD SPACE || utf8mb4_spanish2_ci | PAD SPACE || utf8mb4_roman_ci | PAD SPACE || utf8mb4_persian_ci | PAD SPACE || utf8mb4_esperanto_ci | PAD SPACE || utf8mb4_hungarian_ci | PAD SPACE || utf8mb4_sinhala_ci | PAD SPACE || utf8mb4_german2_ci | PAD SPACE || utf8mb4_croatian_ci | PAD SPACE || utf8mb4_unicode_520_ci | PAD SPACE || utf8mb4_vietnamese_ci | PAD SPACE |+------------------------+---------------+

CREATE TABLE `check_mb4_withvarchar` ( `id` int DEFAULT NULL, `name` varchar(10) CHARACTER SET utf8mb4 COLLATE utf8mb4_general_ci DEFAULT NULL, UNIQUE KEY `name` (`name`)) ENGINE=InnoDB DEFAULT CHARSET=utf8mb4 COLLATE=utf8mb4_0900_ai_ci;

Have you noticed why I have chosen utf8mb4_general_ci which is not a default one ? This is because, this collation comes with PAD SPACE i.e. trailing space will be considered same as WITHOUT SPACE in VARCHAR.

Now, insert some data to confirm our understanding :

mysql> insert into check_mb4_withvarchar values (1,'ankit');Query OK, 1 row affected (0.01 sec)mysql> insert into check_mb4_withvarchar values (1,'ankit ');ERROR 1062 (23000): Duplicate entry 'ankit ' for key 'check_mb4_withvarchar.name

AND this is what I really needed to prove that how one space can corrupt your entire data while upgrading to 8.

So, what is important ?

It is extremely important to understand the behaviour of your data and how does the write operations actually writing the data before planning your migration as such issues looks invisible in beginning but after few months or years, it mess up your data. So, yes deciding character set and collation is extremely important for your data.

Meet me here on linkedin.

Note – This is fork from my previous article but on new domain and with new subject line.

Mutex & MetaData lock handling with Performance and Sys Schema in MySQL

Read about most important part of MySQL. Mutex and meta data locks

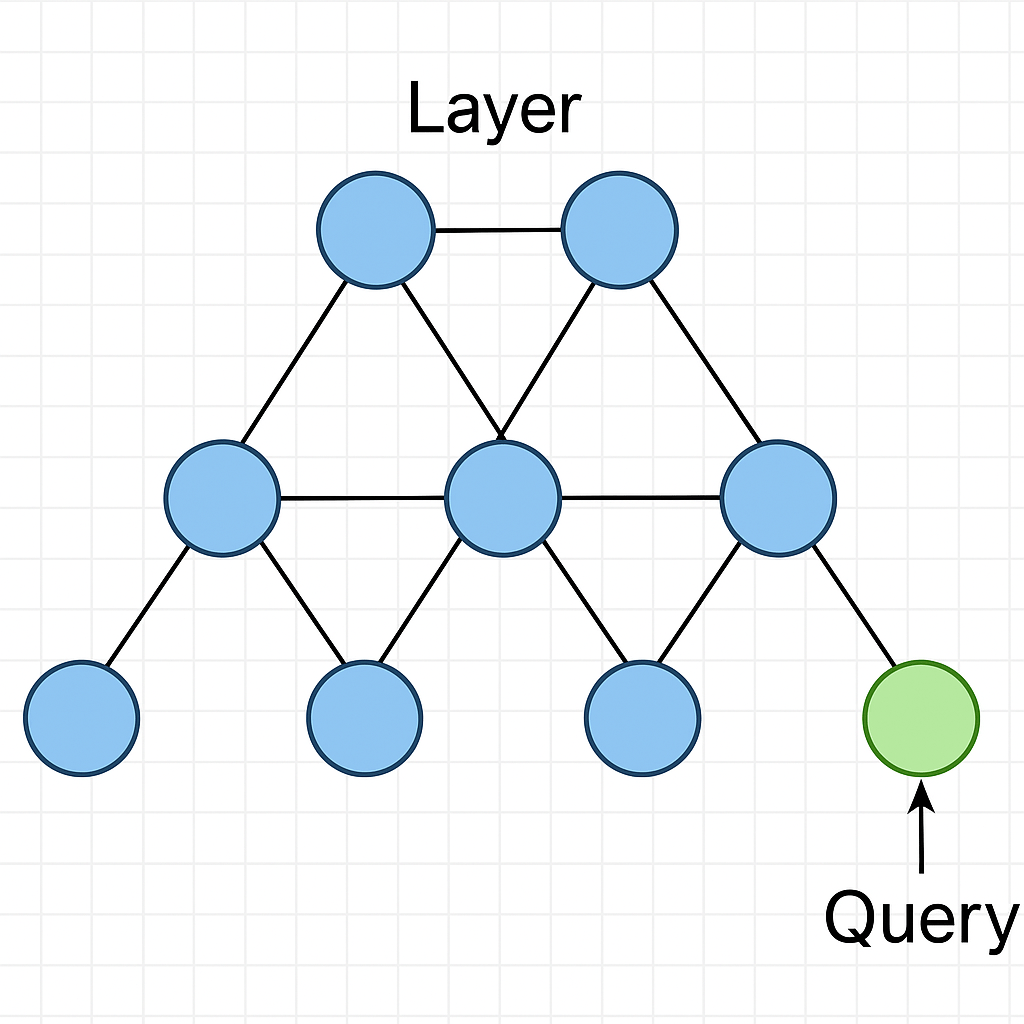

What’s changed in building of Innodb’s b-tree indexes in MySQL 8

This article discuss about how innodb build b tree index and how things improved with 8

Redo flushing. MySQL VS Postgres and Redo in 8/9

One of my earlier post MySQL Innodb – Internal Transaction flow and its related video talks about a transaction flow which gives the internal concept of transaction flow in Innodb. In this article I am going to talk about how the Redo log ( WAL in PG ) flushing is different in MySQL and Postgres and…

You must be logged in to post a comment.