Have you ever divided a single physical book into rather sub-books because the book was too heavy and it is not even easy to quickly navigate to a specific page?

Similarly, partitioning a table is like you are dividing one physical table into various sub-tables (on the same storage and same data directory) to have better storage management, better performance (in some cases), and better archival policies. We can partition a table in various ways. The process is, first we have to choose a partitioning key or partitioning keyS i.e. a composite partition key in which multiple columns are involved. By partitioning key I mean we need to choose a column/columns basis on which we can divide the table.

Let’s say we have the below popular table employees_range. This table is divided into two sub-parts i.e. p0 and p1. If you insert a record with an id less than 100, that particular record will insert in the p0 partition. This is the simplest form of partitioning we can have in our database server and is known as range partitioning. However such type of partitioning has already been covered by multiple articles and is not a topic to be covered under this article.

Whenever you are creating a partition, table structure matters a lot. For example, if you have a primary key and a unique key in the table and you are planning to partition your table, then the partition key should be present in all the keys i.e. either a unique key or as a primary key.

This blog is focused on hash partitioning. If you will understand this, you can also replicate your understanding in the distributed systems where database is sharded into several shards and you can also understand that how data is being written into the respective shards.

Hash partition :

create table t1 (col1 int not null,col2 date not null,col3 int not null,col4 int not null,unique key(col1,col2,col3)) partition by hash(col3) partitions 4;This will divide the table into 6 different sub tables.Numeric 6 means the number of partitions we need. In hash partitioning we doesn’t define the path of data explicitly (by path of data I mean, in which partition, a particular insertion shall take place) as we do in the range partitioning. Hash is a function in computer science/math which transforms an arbitrary string into a fixed size of output.

Now hashing can be of different types.

MySQL, by default uses modulous hashing (linear hashing is different) i.e. x % y where x is the value of partitioning key and y is the number of partition . For example 9 % 5 which means divide 9 by 5 will give remainder as 4 so that will be the hashing. Similarly in above example hashing will be based on the col3 values. Lets try to insert some value

mysql> insert into t1 values (1,NOW(),300,1);Query OK, 1 row affected, 1 warning (0.00 sec)mysql> insert into t1 values (1,NOW(),191,1);

Query OK, 1 row affected, 1 warning (0.01 sec)mysql> insert into t1 values (1,NOW(),0,1);

Query OK, 1 row affected, 1 warning (0.00 sec)mysql> select * from t1 partition(p3);+------+------------+------+------+

| col1 | col2 | col3 | col4 |

+------+------------+------+------+

| 1 | 2024-04-01 | 191 | 1 |

+------+------------+------+------+1 row in set (0.00 sec)mysql> select * from t1 partition(p0);

+------+------------+------+------+

| col1 | col2 | col3 | col4 |

+------+------------+------+------+

| 1 | 2024-04-01 | 0 | 1 |

| 1 | 2024-04-01 | 300 | 1 |

+------+------------+------+------+

2 rows in set (0.00 sec)

300/4 = 0 , so that means data will insert in the first partition.

191/4=3, this will go in 3rd partition

0/4=0, this will go in first partition.

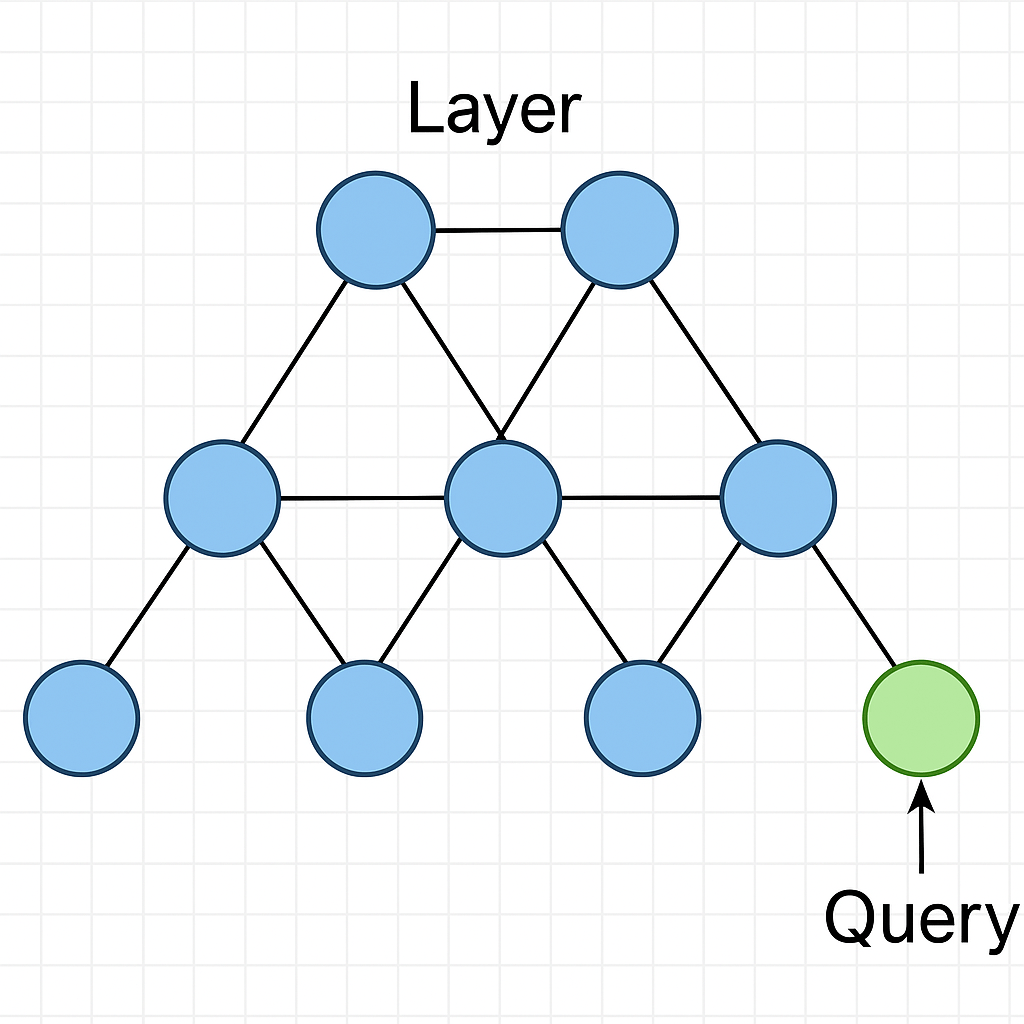

This is the most simplest form of partitioning you will encounter. Same concept can also be applied on multi-tenant databases where databases or tables has been sharded into different servers. See this diagram below

Choosing the definition of hashing can surely impact the write speed of query. If hashing is complex, it will affect the performance. Lets try to use some complex hashing algorithm in the next section.

Latest Articles

How one space can create a nightmare for you…

As you know that MySQL 5.7 is going to be in its end of life this month (when I wrote this article) and many businesses are upgrading to 8 ( except few ones, believe me its true) . Many cloud vendors are providing extra support and time to their customer so that they can move…

tpc-c Benchmarking of MySQL 8.0.44

This setup is on my local machine for mac with 8.0.44. M2 chip, memory 16G High level Results : total transaction executed in 10 min – 247298Transaction per minute – 24729Time taken on average for every case – 0-26ms ( you have to do this calculation , testing wont tell you this. I have done…

What type of databases are well suited for LLM ? part 1

We all know vector data type but do you know its scale os usage, lets see from basics.

You must be logged in to post a comment.